The character’s name “humpty dumpty” has high aboutness (C=22.28), but every time the string umpty occurs, it then immediately occurs again one character later (even though the string is relatively rare overall). “umpty”, incidentally, provides a good intuition for where the method fails. The highlighting doesn’t work perfectly, but it gives a decent impression, which we can use to interpret the VMs results. I’ve highlighted where phrases start and end with a string that was a word (of more than three characters) in the original text. On balance, it seems like most phrases detected start and end at a word boundary, and most complete phrases are indicative of what a local part of the text is about. Here are the top substrings for our concatenated Alice, with all whitespace removed: I couldn’t reproduce their results with their algorithm as they describe it, but I came up with something that performs similarly.

Aboutness at the character level

For their second experiment, the authors removed all whitespace from a book and performed the analysis for all character strings, up to a particular length. I’ll finish up with some thoughts on how we can expand on this, but first, let’s look at another experiment the authors of this method did. Most of them perhaps function like nouns. That suggests that if these words refer to anything, they probably refer to concepts of some sort. More interestingly, note that most of the top words in the Alice example were nouns. If the VMs is about anything, some parts are likely about “shedy” and about “qokeedy”. What does this tell us? Well, not much, but a little. The code is available if anybody wants to try the experiment with a different transcription. If this method is worth anything, it should be invariant to any details in transcription, omitted sections, and character-level detail. I'm just looking for a large chunk of "typical" running Voynichese. This is a crude way to prepare a VMs corpus.
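To make the character-level experiment a bit more concrete, here is a minimal Python sketch: strip all whitespace, collect the start positions of every substring up to a maximum length, and score each substring by how irregular the gaps between its occurrences are. It is not the authors' algorithm, nor the variant used for the results in this post. The function name `substring_scores`, the parameters `max_len` and `min_count`, and the choice of score (spread of the gaps relative to what a geometric distribution would give) are all assumptions made for illustration.

```python
import math
from collections import defaultdict


def substring_scores(text, max_len=8, min_count=5):
    """Score every substring of up to max_len characters by gap irregularity.

    Hypothetical helper: the score is std(gaps) / mean(gaps), divided by
    sqrt(1 - p), the value expected for geometrically distributed gaps
    with p = occurrences / text length. Values well above 1 suggest clustering.
    """
    chars = "".join(text.split())  # remove all whitespace
    total = len(chars)

    # Record the start position of every occurrence of every substring.
    positions = defaultdict(list)
    for start in range(total):
        for length in range(1, max_len + 1):
            if start + length > total:
                break
            positions[chars[start:start + length]].append(start)

    scores = {}
    for sub, pos in positions.items():
        n = len(pos)
        p = n / total
        if n < min_count or p >= 1.0:
            continue  # too rare to judge, or the text is one repeated symbol
        gaps = [b - a for a, b in zip(pos, pos[1:])]
        mean = sum(gaps) / len(gaps)
        var = sum((g - mean) ** 2 for g in gaps) / len(gaps)
        scores[sub] = (math.sqrt(var) / mean) / math.sqrt(1.0 - p)
    return scores
```

Sorting `scores.items()` by value in descending order gives a ranked list of candidate substrings. Memory use grows with `max_len` times the text length, so a small `max_len` is advisable for a whole book.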
Anything between curly brackets was replaced by a space, and any sequence of multiple special characters (!, *, %) was collapsed into one.
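The two cleaning rules just described can be sketched in a few lines of Python. This is only an illustration, not the code used for the experiment: the function name `clean_transcription` is made up, and I am assuming that "collapsed into one" means a run of special characters is reduced to its first character.

```python
import re


def clean_transcription(text):
    """Apply the two crude cleaning rules to a transcription string."""
    # Rule 1: anything between curly brackets (brackets included) becomes a space.
    text = re.sub(r"\{[^}]*\}", " ", text)
    # Rule 2 (assumed reading): a run of two or more of the special
    # characters !, * and % is reduced to the first character of the run.
    text = re.sub(r"[!*%]{2,}", lambda m: m.group(0)[0], text)
    return text
```

For example, `clean_transcription("daiin{plant}shedy!!*qokeedy")` replaces the bracketed comment with a space and reduces `!!*` to a single `!`.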

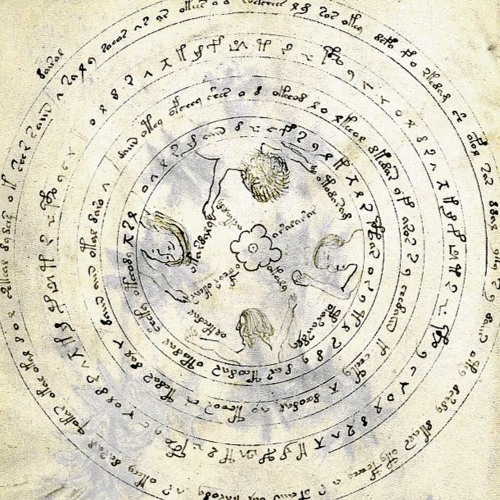

For this experiment, I used the Takahashi EVA transcription. The more unlikely this is, the more aboutness we assign the word. Does it work? Here are the top fifteen words for the concatenated Alice in Wonderland and Alice through the Looking Glass (together roughly the same size as the VMs): The test then simply boils down to computing how unlikely it is to see the given clustering pattern under the assumption that the sequence was produced randomly, or, to be more specific, under the assumption that the sequence was a sample from a geometric distribution. For words with high aboutness, we will see the words clustering together, as if they’re particles, attracting each other. If the words are spread out randomly, we will see the occurrences of the words spread out relatively evenly along the line. Think of the book as a line, indicating the continuous string of words, where we mark each occurrence of the word of interest with a colored dot. They are the words that the text, or at least the section with many highlights, is about. These are, so goes the theory, the words with high aboutness. What we are interested in here is the words for which one page contains a mass of highlights, and two pages on, there are no highlights at all. For other words, the number of highlights per page will decrease, but the expected number per page will remain the same. If the word is a common word, like “it” or “the”, we will see frequent highlights, in roughly equal numbers on each page. Consider the distribution of the highlights across the pages. Imagine a book, where every occurrence of a particular word has been highlighted. In Level statistics of words: finding keywords in literary texts and symbolic sequences (abstract, PDF), the authors provide an interesting statistical approach to unsupervised analysis of text that might hold a lot of promise for computational attacks on the VMs. Surely we would need to translate the thing first.
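The idea of marking each occurrence of a word on a line and asking whether the dots clump together can be sketched in Python as follows. This is a rough illustration under my own assumptions, not the exact statistic behind the \(C\) values quoted in this post: it measures the spread of the gaps between successive occurrences and divides by the spread expected if the gaps followed a geometric distribution. The function name `clustering_scores` and the `min_count` cutoff are hypothetical.

```python
import math
from collections import defaultdict


def clustering_scores(tokens, min_count=5):
    """Return {word: score}; scores well above 1 suggest clustered occurrences.

    For a geometric gap distribution with p = count / len(tokens), the
    ratio std(gaps) / mean(gaps) equals sqrt(1 - p), so dividing by that
    value makes a randomly scattered word score roughly 1.
    """
    # Positions of each word along the "line" of the text.
    positions = defaultdict(list)
    for index, token in enumerate(tokens):
        positions[token].append(index)

    total = len(tokens)
    scores = {}
    for word, pos in positions.items():
        n = len(pos)
        p = n / total
        if n < min_count or p >= 1.0:
            continue  # too few occurrences, or the text is one repeated word
        gaps = [b - a for a, b in zip(pos, pos[1:])]
        mean = sum(gaps) / len(gaps)
        var = sum((g - mean) ** 2 for g in gaps) / len(gaps)
        scores[word] = (math.sqrt(var) / mean) / math.sqrt(1.0 - p)
    return scores
```

Called on a list of words, for instance `clustering_scores(text.split())`, a word that appears at perfectly regular intervals scores 0, a randomly scattered word scores around 1, and a word that appears in bursts scores higher.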
Given that we know so little, it seems a little presumptuous to ask what it’s about. It could well be a hoax: somebody trying to make some money from an old stock of vellum by filling it with enticing scribbles and trying to flog it to some gullible academic. It could be a coded text; it could be a dead or invented language. We know it was probably written in the 15th century, and that it’s probably from Italy, but that’s about it. We don’t know what it is and we don’t know what it says. After at least a hundred years of research, the current state of knowledge of the VMs can best be summed up as follows: we don’t know. Most pages are richly illustrated with non-existent plants, astronomical diagrams and little bathing women. It consists of a little over two hundred pages of vellum, covered in an unknown script.
